Various quantum computing academics are still trying to downplay Google’s claim that D-Wave’s quantum annealing system can be 100 million times faster. “This is 100 million times faster than some specific classical algorithm on problems designed to be very hard for that algorithm but easy for D-Wave,” says Matthias Troyer of the Swiss Federal Institute of Technology in Zurich. In other words, the D-Wave had a massive home advantage.

Better versions of the simulated annealing algorithm can reduce this advantage to just 100 times faster, says Troyer, while other more complex algorithms running on an ordinary PC can beat D-Wave entirely. “A claim of ’100 million speedup’ is thus very misleading,” he says.
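For readers unfamiliar with the classical baseline Troyer mentions, here is a minimal textbook sketch of simulated annealing on a small Ising problem, the kind of spin-energy minimization an annealer is built for. It is not the code Google benchmarked against. The function names, the ring-shaped test problem and the cooling schedule are all assumptions chosen for illustration.

```python
import math
import random


def ising_energy(spins, couplings):
    """Energy E = -sum(J_ab * s_a * s_b) over all coupled pairs."""
    return -sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())


def simulated_annealing(couplings, n_spins, sweeps=200,
                        t_start=5.0, t_end=0.05, seed=0):
    """Illustrative single-spin-flip annealer (hypothetical helper)."""
    rng = random.Random(seed)
    spins = [rng.choice((-1, 1)) for _ in range(n_spins)]

    # Build neighbor lists so the cost of one flip can be computed locally.
    neighbors = {i: [] for i in range(n_spins)}
    for (a, b), j in couplings.items():
        neighbors[a].append((b, j))
        neighbors[b].append((a, j))

    for sweep in range(sweeps):
        # Geometric cooling from t_start down to t_end (an assumed schedule).
        t = t_start * (t_end / t_start) ** (sweep / max(sweeps - 1, 1))
        for i in range(n_spins):
            # Energy change if spin i is flipped.
            delta = 2 * spins[i] * sum(j * spins[k] for k, j in neighbors[i])
            # Metropolis rule: always accept downhill moves, and accept
            # uphill moves with a probability that shrinks as t falls.
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                spins[i] = -spins[i]

    return spins, ising_energy(spins, couplings)


# Toy problem: 8 spins on a ring, each pair of neighbors prefers to align.
ring = {(i, (i + 1) % 8): 1.0 for i in range(8)}
final_spins, final_energy = simulated_annealing(ring, n_spins=8)
print(final_spins, final_energy)
```

The "better versions" in the quote refer to more refined variants of this basic idea. How hard a problem is for a loop like this depends heavily on the shape of the energy landscape, which is why the choice of benchmark problem matters so much.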

You should also keep in mind that the D-Wave is a specialised piece of hardware that costs around $10-15 million. As the Google team admit in a paper detailing their work, a similarly specialised but non-quantum device might be able to match the current D-Wave computer, though they think it isn’t worth exploring this avenue as they believe larger quantum computers will soon exceed these capabilities.

Google’s Neven admits at the end of his blog post that other algorithms can beat the D-Wave, but the Google team think this advantage will disappear as quantum computers get larger.

Others are less sure. “This is certainly the most impressive demonstration so far of the D-Wave machine’s capabilities,” says Scott Aaronson of the Massachusetts Institute of Technology. “And yet, it remains totally unclear whether you can get to what I’d consider ‘true quantum speedup’ using D-Wave’s architecture.”

Will we ever have definitive proof of speedy quantum computers?

Maybe – but it is likely to require new hardware. D-Wave’s goal has always been to get quantum computers to market as fast as possible, but Aaronson thinks their “quantum coherence” – a measure of the fragile quantum states necessary for computation – isn’t as good as that found in quantum chips built by teams who are taking a slower and steadier approach.

Google is hedging its bets – it has hired external researchers to build its own quantum chip. And IBM recently received US government funding to develop its own version.

Both of these seem more promising, says Aaronson. “Things are happening a good deal faster than I’d expected,” he says. “But it remains as important as it ever was to separate out the marketing buzz from what’s actually been shown.”

The Google and D-Wave tests behind the 100 million speedup paper used about 1000 qubits, but they already have 2048 physical qubits in the lab

D-Wave’s Washington chip has 2048 physical qubits, but usually only about 1152 of them are activated.

It seems clear that in 2016 the D-Wave and Google research will use 2000 qubits. The work that has not yet been published is probably already at 2000 qubits. By late 2016 or early 2017, there will be work with 4000 qubits.

There are revised designs with more connections and lower temperature performance and other physical design improvements.

There does not seem to be any reason why D-Wave could not rapidly integrate new superconducting materials and designs that come out of research at IARPA, IBM, Google or elsewhere.

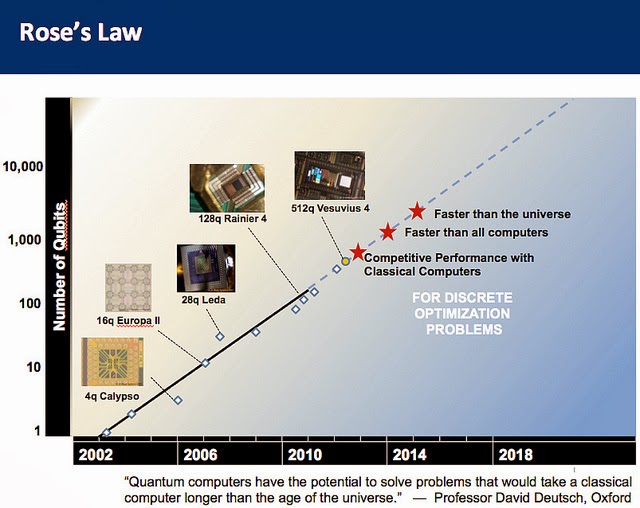

D-Wave saw a 300,000x improvement in performance between its 128-qubit and 512-qubit systems. That kind of performance gain is really unprecedented.

It seems clear that the 4000 qubit systems will utterly crush any possible classical computing approach regardless of dedicated ASIC hardware or the best algorithms.

The slow and steady approaches are not getting tens of millions of dollars in sales and hundreds of millions in funding.

Jerry Chow, a program manager for IBM Research’s Experimental Quantum Computing unit, working on a quantum computer. (IBM)

The UK recently announced a £270 million investment to bring quantum technology – including a quantum computer – to market by 202

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technology and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.