1. A Digital Neurosynaptic Core Using Embedded Crossbar Memory with 45pJ per Spike in 45nm (4 pages). The authors use the chip to reach 89-94% accuracy on image recognition and classification tasks.

The grand challenge of neuromorphic computation is to develop a flexible brain-like architecture capable of a wide array of real-time applications, while striving towards the ultra-low power consumption and compact size of the human brain, all within the constraints of existing silicon and post-silicon technologies. To this end, we fabricated a key building block of a modular neuromorphic architecture, a neurosynaptic core, with 256 digital integrate-and-fire neurons and a 1024×256-bit SRAM crossbar memory for synapses using IBM’s 45nm SOI process. Our fully digital implementation is able to leverage favorable CMOS scaling trends, while ensuring one-to-one correspondence between hardware and software. In contrast to a conventional von Neumann architecture, our core tightly integrates computation (neurons) alongside memory (synapses), which allows us to implement efficient fan-out (communication) in a naturally parallel and event-driven manner, leading to ultra-low active power consumption of 45pJ/spike. The core is fully configurable in terms of neuron parameters, axon types, and synapse states and is thus amenable to a wide range of applications. As an example, we trained a restricted Boltzmann machine offline to perform a visual digit recognition task, and mapped the learned weights to our chip.

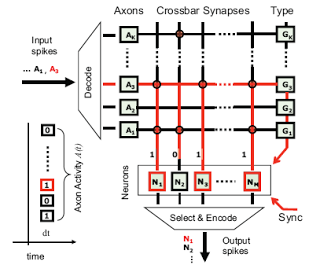

Internal blocks of the core include axons (A), crossbar synapses implemented with SRAM, axon types (G), and neurons (N). An incoming address event activates axon 3, which reads out that axon’s connections, and results in updates for neurons 1, 3 and M.
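The event flow in the figure can be sketched in a few lines of Python. This is only a toy model under stated assumptions, not the chip's actual logic: the class name `CrossbarCore`, the one-weight-per-axon-type scheme, the reset-to-zero rule, and the tiny 4×5 crossbar are all made up for illustration. It shows an address event selecting one axon row, the row's set bits picking the target neurons, and integrate-and-fire neurons spiking once they reach a threshold.

```python
class CrossbarCore:
    """Toy event-driven crossbar core (illustrative assumptions only)."""

    def __init__(self, crossbar, axon_types, type_weights, threshold):
        self.crossbar = crossbar          # crossbar[a][n] == 1: axon a connects to neuron n
        self.axon_types = axon_types      # type index (G) for each axon
        self.type_weights = type_weights  # assumed: one integer weight per axon type
        self.threshold = threshold        # assumed: same firing threshold for all neurons
        self.potential = [0] * len(crossbar[0])

    def deliver(self, axon):
        """Handle one address event: read the axon's row, update connected neurons."""
        weight = self.type_weights[self.axon_types[axon]]
        for neuron, bit in enumerate(self.crossbar[axon]):
            if bit:
                self.potential[neuron] += weight

    def tick(self):
        """End of a time step: neurons at or above threshold fire and reset to zero."""
        fired = [n for n, v in enumerate(self.potential) if v >= self.threshold]
        for n in fired:
            self.potential[n] = 0
        return fired


def demo_core():
    # 4 axons x 5 neurons; axon 3 connects to neurons 1, 3 and the last neuron (4),
    # mirroring the "neurons 1, 3 and M" example in the figure.
    crossbar = [[0] * 5 for _ in range(4)]
    for neuron in (1, 3, 4):
        crossbar[3][neuron] = 1
    return CrossbarCore(crossbar, axon_types=[0, 0, 1, 0],
                        type_weights=[1, -1], threshold=2)
```

With this setup, one event on axon 3 raises the potential of neurons 1, 3 and 4 by one, and a second event pushes all three over the threshold. Neurons that receive no events do no work, which is the event-driven property the abstract credits for the low active power.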

A long-standing goal in the neuromorphic community is to create a compact, modular block that combines neurons, large synaptic fanout, and addressable inputs. Our breakthrough neurosynaptic core, with digital neurons, crossbar synapses, and address-events for communication, is the first of its kind to achieve this long-standing goal in working silicon. The key new component of our design is the embedded crossbar array, which allows us to implement synaptic fanout without resorting to off-chip memory that can create an I/O bottleneck. By bypassing this critical bottleneck, it is now possible to build a large on-chip network of neurosynaptic cores, creating an ultra-low power neural fabric that can support a wide array of real-time applications that are one-to-one with software. Looking forward, to build a human-scale system with 10^14 synapses (distributed across many chips), our next focus is to tackle the formidable but tractable challenges of density, passive power, and active power for inter-core communication.
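To get a feel for the scale gap, here is a back-of-envelope calculation. It assumes each bit of the 1024×256 crossbar counts as one synapse and that a human-scale system is tiled from identical cores; the function name is hypothetical and the result ignores everything else a real system would need.

```python
def cores_needed(target_synapses, axons_per_core=1024, neurons_per_core=256):
    """Number of identical cores needed to hold target_synapses one-bit synapses."""
    synapses_per_core = axons_per_core * neurons_per_core  # 262,144 bits per core
    # Ceiling division using integers only, to avoid floating-point rounding.
    return -(-target_synapses // synapses_per_core)


human_scale_cores = cores_needed(10**14)
```

Under these assumptions the answer is roughly 3.8 × 10^8 cores, which is why density and inter-core communication power are named as the next challenges.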

Efforts to achieve the long-standing dream of realizing scalable learning algorithms for networks of spiking neurons in silicon have been hampered by (a) the limited scalability of analog neuron circuits; (b) the enormous area overhead of learning circuits, which grows with the number of synapses; and (c) the need to implement all inter-neuron communication via off-chip address-events. In this work, a new architecture is proposed to overcome these challenges by combining innovations in computation, memory, and communication, respectively, to leverage (a) robust digital neuron circuits; (b) novel transposable SRAM arrays that share learning circuits, which grow only with the number of neurons; and (c) crossbar fan-out for efficient on-chip inter-neuron communication. Through tight integration of memory (synapses) and computation (neurons), a highly configurable chip comprising 256 neurons and 64K binary synapses with on-chip learning based on spike-timing dependent plasticity is demonstrated in 45nm SOI-CMOS. Near-threshold, event-driven operation at 0.53V is demonstrated to maximize power efficiency for real-time pattern classification, recognition, and associative memory tasks. Future scalable systems built from the foundation provided by this work will open up possibilities for ubiquitous ultra-dense, ultra-low power brain-like cognitive computers.
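The point of a transposable array is that the same synapse bits can be read by row (an axon spike fanning out to neurons) and by column (a neuron firing and updating its own incoming synapses), so the learning logic is needed once per neuron instead of once per synapse. The sketch below illustrates that idea only. The class name, the time window, and the deterministic "set recent inputs, clear the rest" rule are assumptions standing in for spike-timing dependent plasticity; the abstract does not give the chip's actual update rule.

```python
class TransposableSynapses:
    """Toy binary synapse array with row and column access (illustrative only)."""

    def __init__(self, n_axons, n_neurons, window):
        self.bits = [[0] * n_neurons for _ in range(n_axons)]
        self.last_axon_spike = [None] * n_axons  # time of each axon's latest spike
        self.window = window                     # assumed potentiation window, in time steps

    def read_row(self, axon):
        """Row access: all synapses driven by one axon (used for spike fan-out)."""
        return list(self.bits[axon])

    def read_column(self, neuron):
        """Column access: all synapses feeding one neuron (used for learning)."""
        return [row[neuron] for row in self.bits]

    def on_axon_spike(self, axon, t):
        """Record the spike time and return the neurons this axon connects to."""
        self.last_axon_spike[axon] = t
        return [n for n, bit in enumerate(self.bits[axon]) if bit]

    def on_neuron_spike(self, neuron, t):
        """Assumed STDP-like rule: rewrite the firing neuron's whole column,
        setting synapses from axons that spiked within the window and clearing the rest."""
        for axon, t_pre in enumerate(self.last_axon_spike):
            recent = t_pre is not None and 0 <= t - t_pre <= self.window
            self.bits[axon][neuron] = 1 if recent else 0
```

In this toy version the update in `on_neuron_spike` touches exactly one column, so the amount of learning logic scales with the number of neurons, which is the property the abstract highlights.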

In this paper, we demonstrated a highly configurable neuromorphic chip with integrated learning for use in pattern classification, recognition, and associative memory tasks. Through the use of digital neuron circuits and a novel transposable crossbar SRAM array, this basic building block addresses the computation, memory, and communication requirements for large-scale networks of spiking neurons and is scalable to advanced technology nodes. Future systems will build on this base to enable ubiquitously deployable ultra-dense, ultra-low power brain-like cognitive computers.

