During the past several years, an increasing number of AI researchers, including Ben Goertzel, Itamar Arel, Josh Hall, and Peter Voss, have predicted that human-level AIs could emerge within the next decade. While not quite so sanguine, Sandia National Labs researcher Brandon Rohrer believes that an AI with a canine level of intelligence could be created within the next ten years. In an interview with Sander Olson, Rohrer describes his approach to the reinforcement learning problem, “deep learning”, and how a human-level AI might be created within the next twenty years.

Question: Does Sandia National Labs have an AGI program?

I just completed a three-year, internally funded AGI-related project, so at the moment I am working on AGI only on evenings and weekends. My day work consists of other research. Like most AGI researchers, I am seeking outside sources of R&D funding. Sandia does have a cognitive modeling group, but is not explicitly engaged in funding AGI research.

Question: What is the Brain Emulating Cognition and Control Architecture (BECCA)?

BECCA is an attempt to solve what is known as the reinforcement learning problem. BECCA differs from other reinforcement learning approaches by learning features on the fly. It also differs from standard neural nets by learning faster: it forms associations after a single exposure, much more quickly than alternative approaches.

Question: To what extent can BECCA scale?

I believe that it will become massively scalable. The computation time scales approximately linearly with complexity, which is a major advantage of the architecture. If BECCA scaled exponentially in the manner of many AGI programs, the computational requirements would quickly become unmanageable.
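To make the scaling argument concrete, here is a minimal arithmetic sketch. The cost functions and the base of 2 are illustrative assumptions, not measurements of BECCA or of any other AGI program.

```python
# Hypothetical cost models for illustration only; not BECCA's actual costs.

def linear_cost(n, unit=1.0):
    """Cost that grows in proportion to problem complexity n."""
    return unit * n


def exponential_cost(n, unit=1.0):
    """Cost that doubles with every added unit of complexity (assumed base 2)."""
    return unit * 2 ** n


if __name__ == "__main__":
    # Print both costs side by side for a few complexity levels.
    for n in (10, 20, 40):
        print(n, linear_cost(n), exponential_cost(n))
```

Quadrupling the complexity from 10 to 40 quadruples the linear cost, while the exponential cost grows by a factor of 2 to the 30th power, roughly a billion.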

Question: It would seem that BECCA would be well suited to GPU computing.

It should be possible to speed BECCA up to some extent using GPU computing. It isn’t clear whether portions of BECCA will be portable to the massively parallel architectures that GPUs provide. I haven’t spent much time optimizing BECCA’s speed – my focus has been getting the algorithms up to speed. Recompiling code to make it amenable to GPU computing isn’t my forte.

Question: Can a system using BECCA become intelligent?

Not in its current form. But the system is quite scalable, and if it could scale to a dog’s level of intelligence I would be ecstatic.

Question: How would you respond to AGI critics who claim that the brain is too complex to fully understand?

We are decades away from being able to comprehensively understand the workings of the human brain. My goal is more modest, which is to make an artifact that subjectively “feels” smart to a human observer. I’d like to give this artifact progressively higher level and more general goals.

Question: What is feature creation, or “deep learning”?

Feature creation involves taking lower-level inputs, such as edge segments, and organizing them into higher-level objects, such as an intersection. At the highest level, the feature could be a picture of something. So the computer has to turn a large number of lower-level inputs, such as edges, into a high-level picture, such as a dog. “Deep learning” has the same overall goals.
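The layering described here can be sketched in a few lines. This is a toy illustration under loud assumptions: the feature names, the hand-written layer definitions, and the `activate` and `recognize` helpers are all hypothetical, and the features are fixed by hand, whereas BECCA is described as learning its features on the fly.

```python
# Toy hierarchy: each layer maps a feature name to the set of lower-level
# parts that must all be active for that feature to fire.
# All names and definitions below are made up for illustration.

def activate(layer, active):
    """Return the features in this layer whose parts are all active."""
    return {name for name, parts in layer.items() if parts <= active}


def recognize(layers, inputs):
    """Propagate activity upward through the layers, lowest level first."""
    active = set(inputs)
    for layer in layers:
        active |= activate(layer, active)
    return active


LAYERS = [
    # Level 1: edge segments combine into simple shapes.
    {
        "intersection": {"horizontal_edge", "vertical_edge"},
        "curve": {"arc_left", "arc_right"},
    },
    # Level 2: shapes combine into an object (a deliberately crude "dog").
    {"dog": {"intersection", "curve"}},
]

if __name__ == "__main__":
    edges = {"horizontal_edge", "vertical_edge", "arc_left", "arc_right"}
    print("dog" in recognize(LAYERS, edges))
    print("dog" in recognize(LAYERS, {"horizontal_edge"}))
```

With all four edge inputs present, both shapes fire and so does the object above them; with a single edge, nothing higher up activates.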

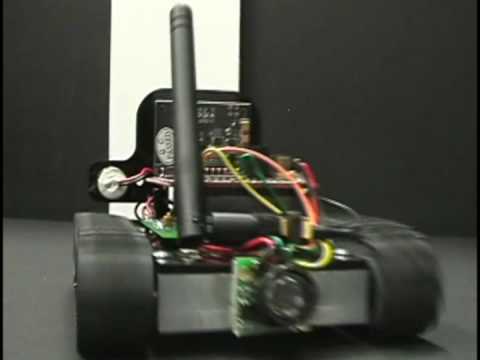

Question: Do you have specific near or mid-term goals for BECCA?

I have made a conscious effort to make BECCA hardware based, so that it is directly applicable to robotics. One of the first problems that I would like to solve is the search and retrieve problem. I describe an object, and the robot will go and find the object on its own. If I had a robot doing that within five years, I would be thrilled.

Question: Do you believe in the concept of a technological singularity?

I believe that the concept of a soft takeoff is much more plausible than the concept of a hard takeoff. Even the internet, which seemed to come out of nowhere, took decades to fully develop. So I see it being a gradual process more than an abrupt transition. I bet that we will have human-level AI within the next fifty years.

Question: What is your assessment of Ben Goertzel’s OpenCog, and other non-emulation based approaches?

Ben and Itamar [Arel] are both good friends of mine, and we have discussed these subjects extensively. Ben and I have differing views of the nature of intelligence, and so he is aiming for something different. But if Itamar’s DeSTIN works well, I could definitely see a collaboration between DeSTIN and BECCA. I am less familiar with Goertzel’s OpenCog, so I don’t know if a collaboration between the two architectures would work.

Question: Is the AI field limited more by insufficient resources, or by insufficient/incorrect theories?

Researchers always feel resource constrained, and I would like to see more AGI approaches garner funding. It may be that some genius already has figured out the secret of AGI but is languishing for lack of money. If I could spend full time on AGI research, my work would go considerably faster. Having said that, I suspect that the algorithms for intelligence may be quite simple.

Question: What level of AI could we expect within the next ten years?

I would be thrilled to see a robot with a dog’s level of intelligence within a decade. We should be able to communicate with this robot in plain English, and give it general commands. Within twenty years, we may even see human-level AI, although that is far more uncertain. I am thrilled by the prospect that truly intelligent machines may actually emerge within my lifetime.
