Research groups at leading universities and companies, including Google, Microsoft and IBM, are part of a worldwide race to realise the first quantum computer that crosses into the ‘quantum computational singularity’ or quantum supremacy.

This represents a point where quantum computers become faster than any non-quantum supercomputer. Quantum computers have vastly better speed scaling. So once quantum computers surpass classical computers then quantum computers will remain dominant.

A team of scientists from Bristol have discovered that the boundary to this singularity is further away than previously thought.

The results apply to a highly influential quantum algorithm known as ‘boson sampling’, which was devised as a very direct route to demonstrate quantum computing’s supremacy over classical machines.

The boson sampling problem is designed to be solved by photons (particles of light) controlled in optical chips – technology pioneered by Bristol’s Quantum Engineering and Technology Labs (QETLabs).

Predicting the pattern of many photons emerging from a large optical chip is related to an extremely hard random matrix calculation.

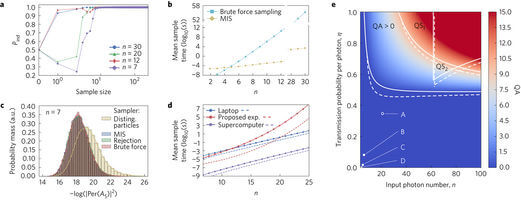

With the rapid progress in quantum technologies, it appeared as though a boson sampling experiment that crossed into the quantum computational singularity was within reach. However, the Bristol team were able to redesign an old classical algorithm to simulate boson sampling, with dramatic consequences.

Dr Anthony Laing, who heads a group in QETLabs and led this research, said: “It’s like tuning up an old propeller aeroplane to go faster than an early jet aircraft.

“We’re at a moment in history where it is still possible for classical algorithms to outperform the quantum algorithms that we expect to ultimately be supersonic.

“It was believed that 30 or even 20 photons would be enough to demonstrate quantum computational supremacy.”

Yet he was able to simulate boson sampling for 20 photons on his own laptop, and increased the simulation size to 30 photons by using departmental servers.

Alex added: “With access to today’s most powerful supercomputer, we could simulate boson sampling with 50 photons.”

“But demonstrating such a feat [finding or creating better classical algorithms] meant assembling a crack team of scientists, mathematicians, and programmers.”

It is predicted that quantum computers will dramatically outperform their conventional counterparts. However, large-scale universal quantum computers are yet to be built. Boson sampling is a rudimentary quantum algorithm tailored to the platform of linear optics, which has sparked interest as a rapid way to demonstrate such quantum supremacy. Photon statistics are governed by intractable matrix functions, which suggests that sampling from the distribution obtained by injecting photons into a linear optical network could be solved more quickly by a photonic experiment than by a classical computer. The apparently low resource requirements for large boson sampling experiments have raised expectations of a near-term demonstration of quantum supremacy by boson sampling. Here we present classical boson sampling algorithms and theoretical analyses of prospects for scaling boson sampling experiments, showing that near-term quantum supremacy via boson sampling is unlikely. Our classical algorithm, based on Metropolised independence sampling, allowed the boson sampling problem to be solved for 30 photons with standard computing hardware. Compared to current experiments, a demonstration of quantum supremacy over a successful implementation of these classical methods on a supercomputer would require the number of photons and experimental components to increase by orders of magnitude, while tackling exponentially scaling photon loss.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technology and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.