How can a net decipher and label patterns that change with time? For example, could a net be used to scan traffic footage and immediately flag a collision? Through the use of a recurrent net, these real-time interactions are now possible.

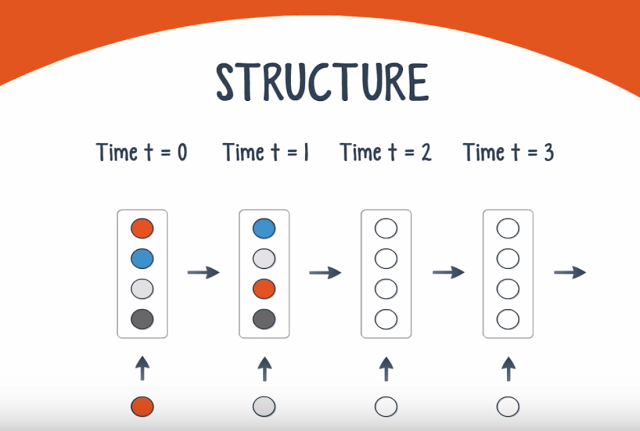

Recurrent Neural Nets (RNNs) date back to the 1980s; Sepp Hochreiter and Juergen Schmidhuber are best known for the LSTM, a gated RNN variant they introduced in 1997. The three deep nets we’ve seen thus far – MLP, DBN, and CNN – are known as feedforward networks since a signal moves in only one direction across the layers. In contrast, RNNs have a feedback loop where the net’s output is fed back into the net along with the next input. In its simplest form an RNN has just one recurrent layer of neurons, which makes it structurally one of the simplest types of nets.
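The feedback loop can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the names `rnn_step` and `run_rnn`, the `tanh` activation, and the weight values are all assumptions chosen for the example.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b):
    """One recurrent step: new state = tanh(W_xh*x + W_hh*h_prev + b)."""
    h_new = []
    for j in range(len(b)):
        total = b[j]
        total += sum(W_xh[j][i] * x[i] for i in range(len(x)))
        # Feedback term: the previous state re-enters the same layer.
        total += sum(W_hh[j][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

def run_rnn(inputs, W_xh, W_hh, b):
    """Apply the same layer at every time step, carrying the state forward."""
    h = [0.0] * len(b)
    states = []
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b)
        states.append(h)
    return states

# Illustrative (untrained) weights: 1 input feature, 2 hidden units.
W_xh = [[0.5], [-0.3]]
W_hh = [[0.1, 0.2], [0.0, 0.4]]
b = [0.0, 0.0]

# Only the first input is non-zero; later states are still non-zero
# because the earlier state is fed back in.
sequence = [[1.0], [0.0], [0.0]]
states = run_rnn(sequence, W_xh, W_hh, b)
```

Note that the same weights are reused at every step, so the number of parameters does not grow with the length of the sequence.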

Like other nets, RNNs receive an input and produce an output. Unlike feedforward nets, the input, the output, or both can be a sequence. Here are some sample applications for different input-output scenarios:

– Single input, sequence of outputs: image captioning

– Sequence of inputs, single output: document classification

– Sequence of inputs, sequence of outputs: video processing by frame, statistical forecasting of demand in Supply Chain Planning
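The three scenarios above differ only in how the same recurrent step is driven and which states are read out. The sketch below assumes a single-neuron cell with made-up weights; `step`, `one_to_many`, `many_to_one`, and `many_to_many` are hypothetical names for illustration.

```python
import math

def step(x, h, w_x=0.8, w_h=0.5):
    """Scalar recurrent step with illustrative, untrained weights."""
    return math.tanh(w_x * x + w_h * h)

def one_to_many(x, n_steps):
    """Single input, sequence of outputs (e.g. image -> caption)."""
    h = step(x, 0.0)
    outputs = [h]
    for _ in range(n_steps - 1):
        h = step(0.0, h)  # no new input; the carried state drives the output
        outputs.append(h)
    return outputs

def many_to_one(xs):
    """Sequence of inputs, single output (e.g. document -> class score)."""
    h = 0.0
    for x in xs:
        h = step(x, h)
    return h  # only the final state is read out

def many_to_many(xs):
    """Sequence of inputs, sequence of outputs (e.g. one label per frame)."""
    h = 0.0
    outputs = []
    for x in xs:
        h = step(x, h)
        outputs.append(h)  # one output per input
    return outputs
```

In a real model each state would typically pass through a trained output layer; here the raw state stands in for the output to keep the sketch short.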

RNNs are trained using backpropagation through time, which reintroduces the vanishing gradient problem. In fact, the problem is worse with an RNN because each time step is the equivalent of a layer in a feedforward net. Thus if the net is trained on sequences 1000 time steps long, the gradient can vanish exponentially, just as it would in a 1000-layer MLP.
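A small numerical sketch makes the exponential decay concrete. It assumes a single-neuron RNN with a `tanh` activation, no inputs, and a recurrent weight of 0.9 (all illustrative choices). By the chain rule, the gradient of the final state with respect to the initial state is a product of one factor per time step, and each factor here is at most 0.9.

```python
import math

def gradient_through_time(w_h, n_steps, h0=0.5):
    """d h_T / d h_0 for the scalar recurrence h_t = tanh(w_h * h_{t-1})."""
    h = h0
    grad = 1.0
    for _ in range(n_steps):
        h = math.tanh(w_h * h)
        # Chain rule factor for this step: w_h * tanh'(.) = w_h * (1 - h_t^2)
        grad *= w_h * (1.0 - h * h)
    return grad

# Gradient magnitude after unrolling over more and more time steps.
gradients = {n: gradient_through_time(0.9, n) for n in (1, 10, 100, 1000)}
```

With these assumed values the gradient after 1000 steps is bounded by 0.9 to the power 1000, far too small to drive any useful weight update for the earliest steps.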

There are different approaches to address this problem, the most popular of which is gating. Gating takes the output of any time step and the next input, and performs a transformation before feeding the result back into the RNN. There are several gated architectures, the LSTM being the most popular. Other approaches to address this problem include gradient clipping, steeper gates, and better optimizers.
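As a rough sketch of two of these ideas, the code below shows one step of a single-unit LSTM cell in its standard formulation, followed by gradient clipping by norm. The function names, the `params` layout, and all numeric values are assumptions made for the example; real implementations work on vectors and learn the weights.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One step of a scalar LSTM. params maps gate name -> (w_x, w_h, bias)."""
    def pre(name):
        w_x, w_h, bias = params[name]
        return w_x * x + w_h * h_prev + bias

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much of the new candidate to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # candidate value computed from x and h_prev
    c = f * c_prev + i * g            # additive update of the cell state
    h = o * math.tanh(c)              # output fed back at the next step
    return h, c

def clip_by_norm(grads, max_norm):
    """Rescale a gradient vector so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

# Illustrative parameters: forget gate nearly open, input gate nearly closed,
# so the cell state passes through the step almost unchanged.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 1.0, 0.0),
}
h, c = lstm_step(1.0, 0.0, 2.0, params)
```

The additive cell-state update is what lets information, and gradient, survive many steps when the forget gate stays close to 1. Clipping addresses the opposite failure, where gradients grow too large rather than vanish.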

GPUs are an essential tool for training an RNN. A team at Indico compared the speed boost from using a GPU over a CPU, and found a 250-fold increase. That’s the difference between 1 day and over 8 months!

A recurrent net has one additional capability – it can predict the next item in a sequence, essentially acting as a forecasting engine.
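One common way to forecast several steps ahead is to feed each prediction back in as the next input. The sketch below assumes a single-neuron cell with a linear readout and untrained, illustrative weights; `forecast`, `step`, and the weight names are hypothetical.

```python
import math

def step(x, h, w_x, w_h):
    """Scalar recurrent step."""
    return math.tanh(w_x * x + w_h * h)

def forecast(history, n_ahead, w_x=0.6, w_h=0.3, w_out=1.2):
    """Warm up on observed values, then roll forward on the net's own predictions."""
    h = 0.0
    for x in history:
        h = step(x, h, w_x, w_h)      # absorb the observed sequence
    predictions = []
    for _ in range(n_ahead):
        y = w_out * h                 # readout: predicted next item
        predictions.append(y)
        h = step(y, h, w_x, w_h)      # the prediction becomes the next input
    return predictions

future = forecast([0.1, 0.2, 0.3], n_ahead=5)
```

With trained weights the same loop would produce an actual forecast; with the placeholder values here it only demonstrates the control flow.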

SOURCE – Youtube (Jagannath Rajagopal (Creator, Producer and Director), Nickey Pickorita (YouTube art), Isabel Descutner (Voice), Dan Partynski (Copy Editing), Marek Scibior (Prezi creator, Illustrator))
