Rob Maurer of Tesla Daily has posted highlight notes from the X.AI Twitter Space, and Farzad has a supercut of the Space.

Elon appears to have said that Tesla's work on AI has given them insights into AGI, and that Tesla had over-complicated the problem. This suggests that simplifying and scaling up their systems could be the path to AGI. I believe this ties in with Tesla's recent work to unify all of its FSD and Teslabot neural net software and to scale as rapidly as possible to 100 exaflops of compute.

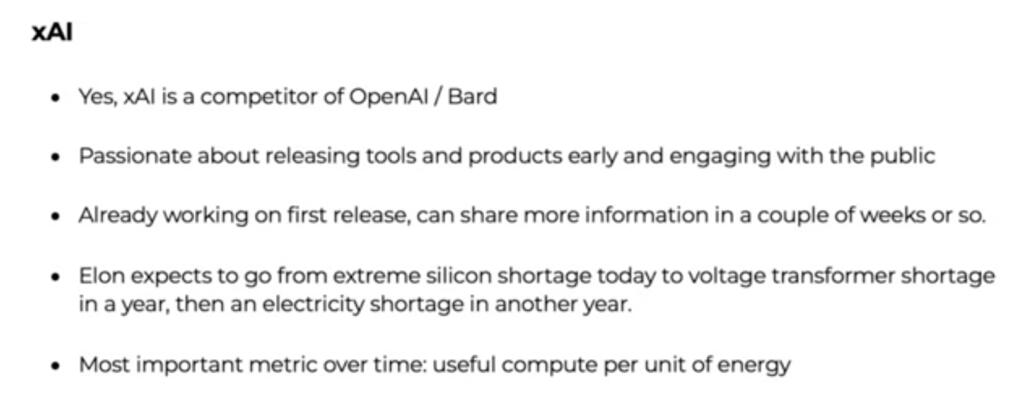

Tesla and X.AI will work together, but at arm's length.

Tesla Hardware 4 will be 3-5 times more capable than Hardware 3, and Hardware 5 will be 4-5 times more capable than Hardware 4.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting-edge technologies, he is currently a Co-Founder of a startup and fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio and podcast interviews. He is open to public speaking and advising engagements.

One thing I’d like to see:

Ask an AI how to get to space…but DON’T mention the existence of rockets.

See what it comes up with.

Maybe by withholding information, old problems can get new solutions.

Curiosity is definitely not sufficient. Humans are only really interesting to other humans.

I really wonder if "curiosity" is sufficient to make a "nice enough" superhuman AGI.

Mengele was presumably very curious…

The doctors who studied the effects of untreated syphilis on Black men were presumably very curious…

A child may be very curious to observe ants while burning them with a magnifying glass.

The problem is that they do not care much about the object of their curiosity.

Do we want humanity to survive as interesting specimens in a bunch of giant petri dishes?

Hm… I wonder what Elon Musk meant about over-complicating the FSD problem? Probably that they should have gone for end-to-end training right away instead of building all these elaborate perception models.

I personally don't think that FSD is solved, only that Tesla has reached an equivalent level of performance with end-to-end training (which will be released as version 12.0). God knows Tesla should have enough clips for end-to-end training, probably several billion.