Vicuna-13B is an open-source chatbot trained by fine-tuning LLaMA on user-shared conversations collected from ShareGPT. A preliminary evaluation using GPT-4 as a judge shows that Vicuna-13B achieves more than 90%* of the quality of OpenAI ChatGPT and Google Bard, while outperforming other models such as LLaMA and Stanford Alpaca in more than 90%* of cases. Training Vicuna-13B cost around $300. The training and serving code are publicly available for non-commercial use.

The Vicuna code is available in FastChat's GitHub repository.

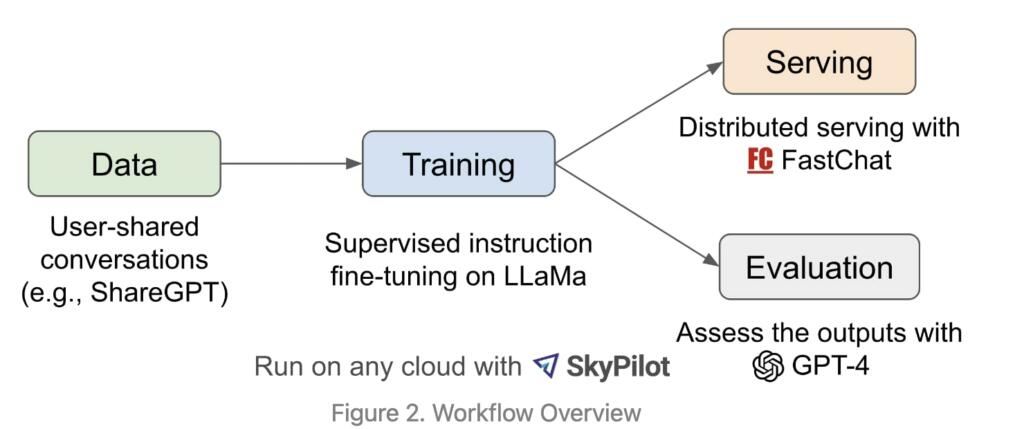

Vicuna-13B is fine-tuned from LLaMA on user-shared conversations collected from ShareGPT. It is backed by an enhanced dataset and an easy-to-use, scalable infrastructure.

The results are impressive and comparable to ChatGPT's responses. Vicuna-13B is an impressive model and could be a serious competitor to other open-source models.
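
The two "90%*" figures from the GPT-4-as-judge evaluation measure different things. A minimal sketch, assuming the judge gives each model's answer to a question a score from 1 to 10 (an assumption about the protocol, not a description of the exact prompt used): "quality relative to ChatGPT" is read as the ratio of summed scores, and "outperforming in more than 90% of cases" as the share of questions where Vicuna's score is strictly higher. `JudgedPair`, `relative_quality`, and `win_rate` are hypothetical names, and the scores below are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class JudgedPair:
    """One question answered by two models, each answer scored 1-10 by the judge (hypothetical)."""
    score_a: float  # judge's score for model A (e.g. Vicuna-13B)
    score_b: float  # judge's score for model B (e.g. ChatGPT or Alpaca)


def relative_quality(pairs: list[JudgedPair]) -> float:
    """Model A's summed score as a fraction of model B's summed score."""
    total_a = sum(p.score_a for p in pairs)
    total_b = sum(p.score_b for p in pairs)
    return total_a / total_b


def win_rate(pairs: list[JudgedPair]) -> float:
    """Fraction of questions where A scores strictly higher than B (ties count as non-wins)."""
    wins = sum(1 for p in pairs if p.score_a > p.score_b)
    return wins / len(pairs)


# Toy, made-up scores for illustration only; not data from the Vicuna evaluation.
pairs = [JudgedPair(8, 9), JudgedPair(9, 9), JudgedPair(7, 8), JudgedPair(10, 9)]
print(f"relative quality: {relative_quality(pairs):.0%}")
print(f"win rate: {win_rate(pairs):.0%}")
```

Treating ties as non-wins is one choice among several; counting a tie as half a win would raise the win rate without changing the relative-quality ratio.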

Brian Wang is a Futurist Thought Leader and a popular science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting-edge technologies, he is currently a Co-Founder of a startup and fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio interviews and podcasts. He is open to public speaking and advising engagements.

Why use GPT-4 to evaluate the 'quality' of the responses?

My understanding is that all large language models 'hallucinate', so their 'judgement' on anything should not be trusted.

These models are quickly moving into territory where embedding them becomes possible. Vicuna is about 7.6 GB in size and needs only a bit more memory than that to run.
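
The size figure follows from simple arithmetic on parameter count and bits per weight. The sketch below assumes 13 billion parameters and 4-bit weights with one 16-bit scale per block of 32 weights (a common block-quantization layout, assumed here rather than taken from the comment). For the "bit more to run", it assumes a 16-bit key/value cache sized for LLaMA-13B's 40 layers and hidden size 5120 at a 2048-token context. Activations, the tokenizer, and file metadata are ignored, so treat the results as rough estimates; `weights_gb` and `kv_cache_gb` are hypothetical helper names.

```python
def weights_gb(n_params: float, bits_per_weight: int, scale_bits: int = 0, block_size: int = 32) -> float:
    """Approximate weight storage in GB (1e9 bytes).

    Each block of `block_size` weights is assumed to carry one extra scale of
    `scale_bits` bits; pass scale_bits=0 for unquantized (e.g. fp16) weights.
    """
    effective_bits = bits_per_weight + scale_bits / block_size
    return n_params * effective_bits / 8 / 1e9


def kv_cache_gb(n_layers: int, d_model: int, n_ctx: int, bytes_per_value: int = 2) -> float:
    """Key/value cache size in GB: one key and one value vector per layer per token."""
    return 2 * n_layers * n_ctx * d_model * bytes_per_value / 1e9


N_PARAMS = 13e9  # "13B", rounded

print(f"fp16 weights:  ~{weights_gb(N_PARAMS, 16):.1f} GB")
print(f"8-bit weights: ~{weights_gb(N_PARAMS, 8, scale_bits=16):.1f} GB")
print(f"4-bit weights: ~{weights_gb(N_PARAMS, 4, scale_bits=16):.1f} GB")
# Assumed LLaMA-13B shape: 40 layers, hidden size 5120; 2048-token context.
print(f"fp16 KV cache at 2048 tokens: ~{kv_cache_gb(40, 5120, 2048):.1f} GB")
```

Under these assumptions the 4-bit weights come to roughly 7.3 GB and the cache adds under 2 GB, the same range as the figures in the comment; the exact size depends on the quantization format actually used.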

It's not unthinkable to have all the quantized weights fused in as fixed values in an FPGA or ASIC, with an efficient, low-power architecture for the weight calculations.
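
"Quantized weights" here means each weight is stored as a small integer plus a shared scale, which is what would be baked into the hardware in this scheme. A minimal sketch of symmetric per-block 4-bit quantization, using only the standard library: each block keeps one float scale and integers in the range -7 to 7, and dequantizing costs one multiply per weight. The function names are hypothetical, and real formats differ in details such as zero points, rounding rules, and block size.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block: floats -> signed ints sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    # The largest-magnitude weight maps to +/-qmax; guard against an all-zero block.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights: one multiply per stored integer."""
    return [scale * v for v in q]


block = [0.12, -0.53, 0.98, -0.07, 0.31, 0.0, -0.88, 0.45]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print("ints:", q)
print(f"scale={scale:.4f}, max error={max_err:.4f}")
```

Because the scale is chosen so every weight divided by it falls within [-7, 7], rounding never needs clamping in practice and the reconstruction error per weight stays within half a scale step.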

Such a chip would give any device embedded LLM capabilities from the moment it powers on, for example a server's remote management engine. With appropriate prompt training, as shown above, it could be an expert at troubleshooting the device's many potential issues, regardless of the state of the OS.

Meta is being silly by not fully open-sourcing LLaMA and all of its derivatives.

I sent you an email. Wanted to connect and discuss this topic.

Jean-Baptiste, what I would like to see is an AI assistant for a role like a Peace Corps volunteer or community organizer working in a setting where the literacy rate is perhaps 1/3 or less, people do not have access to electricity, and clean water is a serious need that is not easily met. Think of a community organizer as presented by Saul Alinsky in Rules for Radicals.

It seems that a chatbot could be trained to provide how-to answers in a form understandable by a community whose members may have smartphones but may not be literate in English. A visual and audio interface would be needed, as well as the capacity to call up instructional videos.

Can Vicuna be used to create this?

I am doing a proof of concept of the idea described in your comment; feel free to contact me.

I am intrigued by this idea as literacy/education, income inequality, gentrification, etc. are close to my heart. Any way we can connect on this project?

I think this is where we’re headed and would give awesome capabilities in communication…