A superintelligence is a hypothetical agent that possesses intelligence far surpassing that of the brightest and most gifted human minds. “Superintelligence” may also refer to a property of problem-solving systems (e.g., superintelligent language translators or engineering assistants) whether or not these high-level intellectual competencies are embodied in agents that act in the world. A superintelligence may or may not be created by an intelligence explosion and associated with a technological singularity.

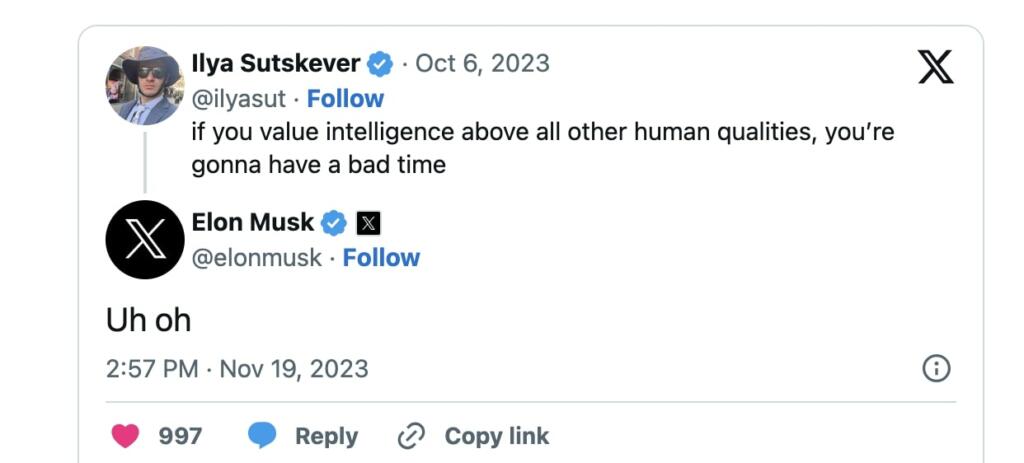

Tweets from Elon Musk and OpenAI chief scientist Ilya Sutskever suggest that the chaos over control of OpenAI is about the emergence of Artificial Super Intelligence.

Elon is very worried. He says Ilya has a good moral compass and does not seek power. He would not take such drastic action unless he felt it was absolutely necessary.

Elon Musk knows everyone involved at OpenAI and funded OpenAI with a billion dollars.

It also seems like Elon is trying to win Ilya Sutskever over to xAI.

I am very worried.

Ilya has a good moral compass and does not seek power.

He would not take such drastic action unless he felt it was absolutely necessary.

— Elon Musk (@elonmusk) November 19, 2023

2x uh oh https://t.co/HTuakbnzTM

— Jeff Lutz 🔋 (@thejefflutz) November 19, 2023

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.

I bet Altman was using it to build weapons systems.

What was the rumored “large discovery” at OpenAI two weeks ago? Was this the reason behind the palace coup?

Wasn’t it Ilya Sutskever who told The Verge that open-sourcing AI “is not wise”?

Oh, here it is:

Well, it’s been a few years since OpenAI started moving in a closed-source direction and I don’t think that it’s obvious at all that open-sourcing AI isn’t wise. I guess if you were on the inside, it might be obvious.

Normally I agree with Elon’s takes. In this, I flat out disagree with him. Ilya doesn’t have a good moral compass. He’s a technopriest, and thinks that he can steward this technology for us since he has a PhD and Joe Sixpack doesn’t. Maybe he has good intentions, maybe he is fooling himself, but I say this with all the love in my heart: I hope those guys never work in this field again (although it seems I won’t get my wish).

My personal impression of Sam Altman was that he was totally focused on the best future for Sam Altman. This is based on what he did with OpenAI and things he said, but I could be wrong. In any case, with something as potentially critical as AI, can we trust him? Clearly the board felt he was not being candid with them, to the extent that they lost trust in him.

“if you value intelligence above all other human qualities, you’re gonna have a bad time”

You could have said the same thing when calculators and printing presses were invented. You replace an intelligent human with a non-intelligent thing, and if you are obsessed with human intelligence you are going to have a bad time. It doesn’t mean you have invented AGI.

You simply don’t fire a CEO on a whim.

But if so, the speed would be crazy: AGI only one year after the launch of ChatGPT?

Exactly no one predicted that.

https://www.genolve.com/design/socialmedia/memes/Will-an-AGI-Take-Over-the-World