Stephen Wolfram’s conclusions:

00:49 Won’t AI Eventually Be Able to Do Everything?

AI cannot solve science, but it can help speed science up.

Science was transformed by math centuries ago.

What can we expect AI to be able or unable to do for science?

5:01 The Hard Limit of Computational Irreducibility

Computational irreducibility constrains what AI can do. Stephen narrows his definition of AI to machine learning and LLM-like systems.

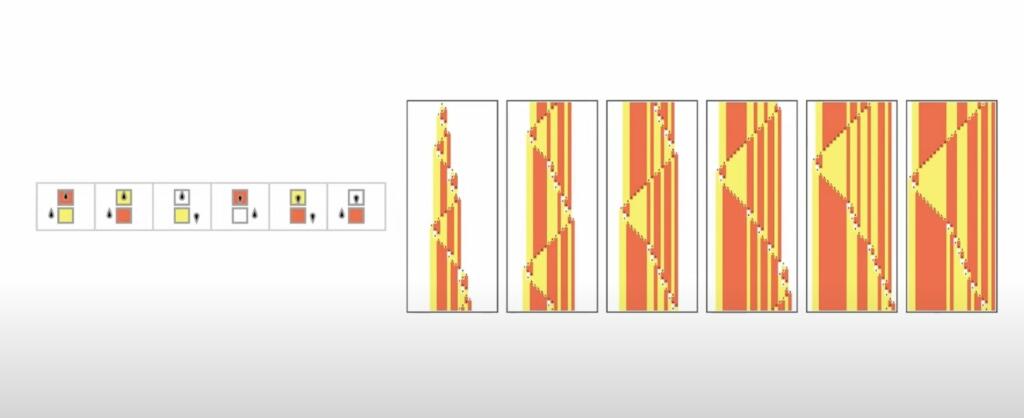

9:45 Things That Have Worked in the Past

Stephen reviews some of his work with cellular automata and other discoveries he made through enumeration.
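For readers unfamiliar with cellular automata, here is a minimal Python sketch of an elementary cellular automaton. The summary above does not say which automata Stephen covered, so the choice of Rule 30 is an assumption, as are the function names `step` and `run`. Rule 30 is a common illustration of computational irreducibility: the update rule is trivial, yet in practice the pattern is obtained by running every step.

```python
def step(cells, rule=30):
    """Apply one update of an elementary cellular automaton (wraparound edges)."""
    n = len(cells)
    out = []
    for i in range(n):
        left, center, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        # The three-cell neighborhood forms a number 0..7 that selects one bit of the rule.
        idx = (left << 2) | (center << 1) | right
        out.append((rule >> idx) & 1)
    return out


def run(width=31, steps=15, rule=30):
    """Start from a single live cell in the middle and return all rows, including the first."""
    cells = [0] * width
    cells[width // 2] = 1
    history = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        history.append(cells)
    return history


if __name__ == "__main__":
    for row in run():
        print("".join("#" if c else "." for c in row))
```

Changing the hypothetical `rule` argument to another value from 0 to 255 enumerates the other elementary rules, which is the kind of exhaustive search the chapter title alludes to.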

14:34 Can AI Predict What Will Happen?

23:47 Predicting Computational Processes

29:10 Identifying Computational Reducibility

40:23 AI in the Non-human World

51:14 Solving Equations with AI

57:09 AI for Multicomputation

1:09:28 Exploring Spaces of Systems

1:17:49 Science as Narrative

1:28:57 Finding What’s Interesting

1:47:40 Beyond the “Exact Sciences”

1:53:58 So… Can AI Solve Science?

He thinks ML/AI is fundamentally limited, but that combining it with formal systems and methods will provide good value.

1:58:49 Q&A

2:33:16 End stream

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio and podcast interviews. He is open to public speaking and advising engagements.

How could he have gone through those examples regarding the sine wave without discussing recurrent neural nets?

Science is an abstraction created by humans to describe, interpret, and understand the world as we see it. But science is not always right, and we have seen it make huge leaps, from a flat-world view to quantum theory. There is no reason to believe that more leaps are not coming.

AIs, though not trained to do science, can already make better weather predictions than current scientific weather models. This tells us that AIs do not need to understand science first to do a better job, and that it would be wiser to let AIs create their own abstraction of the world rather than insist on ours. If we truly want to improve science, we need to allow ourselves to be wrong about it, and that is only possible when we do not make science into a limitation.